Survey Highlights Infrastructure Transformation Occurring at the Edge of the Network

The Transformation of the Edge

Edge computing refers to compute and storage that sits between centralized data centers and the users and devices that generate or consume data. It is often deployed as an alternative to cloud and central data centers, offering lower latency and lower data transmission costs than centralized resources. But edge computing also contributes to cloud computing growth: edge sites can act as a staging post for data that is ultimately sent to the cloud for processing, storage, or long-term analysis.

Industries such as education, financial services, and retail have long relied on local storage and computing to support their distributed operations. These industries now face the challenge of determining whether these legacy edge sites can meet the requirements of emerging edge use cases or need to be replaced or supplemented with purpose-built edge computing sites. Simultaneously, other industries must build out the edge of their networks to capitalize on the opportunity presented by new digital applications.

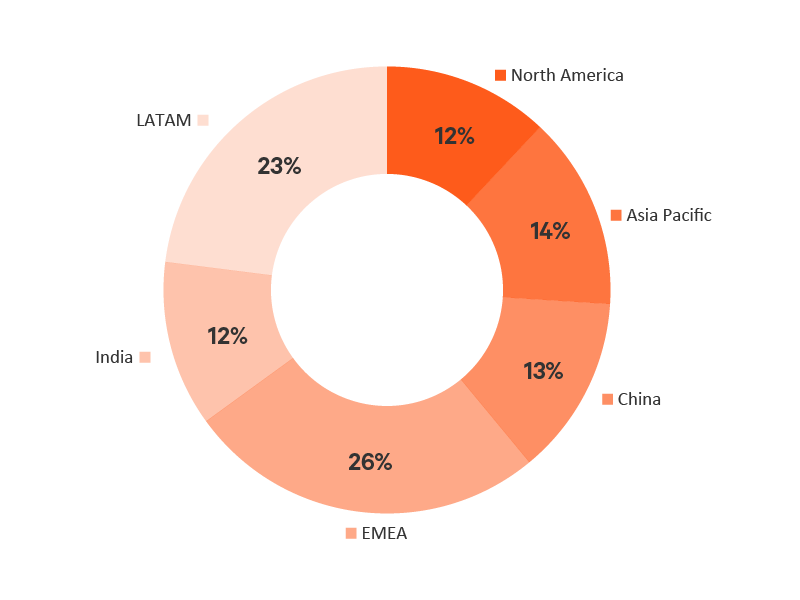

The Vertiv What’s Your Edge global survey collected input from 156 industry professionals on their current edge deployments and plans. Regions represented in the survey include Asia Pacific; China; Europe, Middle East and Africa (EMEA); India; Latin America (LATAM) and North America.

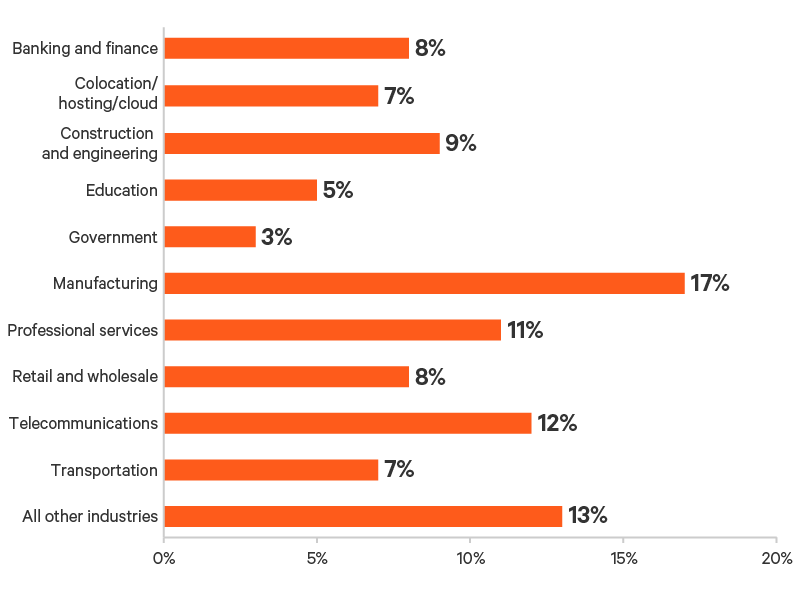

Key industries represented include banking and finance (8%), colocation/hosting/cloud (7%), engineering and construction (9%), education (5%), manufacturing (17%), professional services (11%), retail (8%), telecommunications (12%), and transportation (7%).

Defining the Edge

Legacy edge sites refer to distributed IT resources that have traditionally been deployed in remote offices and other business locations to enable local data processing and communications.

Purpose-built edge sites refer to sites being designed and deployed specifically to support edge use cases such as Industrial Internet of Things (IIoT) applications, autonomous robots, predictive analytics, and condition-based monitoring.

The Shift to Cloud and Edge

The more data that is generated and consumed as a result of digitalization and new applications, the more edge and cloud computing capacity organizations will require.

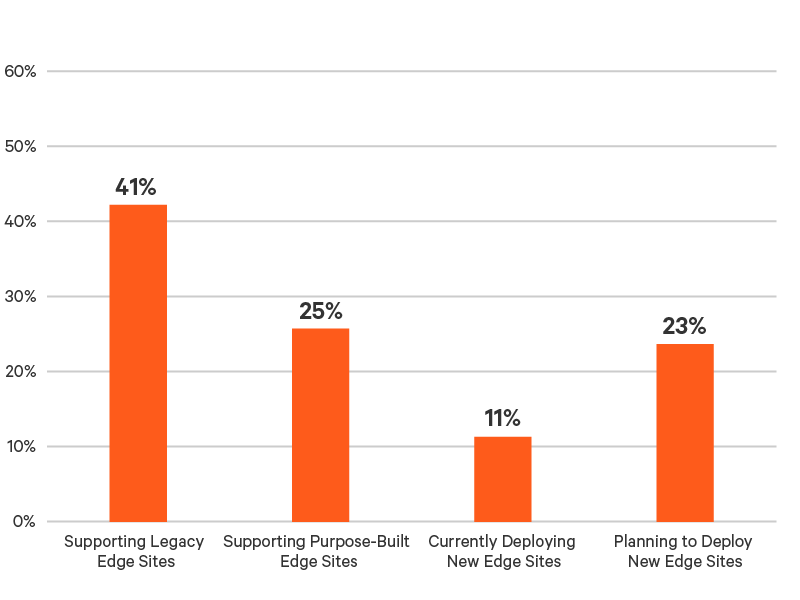

Figure 1: Percent of participants supporting, deploying, or planning to deploy edge sites.

Participants in EMEA and India were the most likely to be supporting legacy edge sites, with about half (49% and 50%, respectively) of the edge activity in these two regions consisting of legacy sites. In all other regions, 37% of participants were supporting legacy edge sites.

Participants in LATAM and North America were the most likely to have already deployed purpose-built edge sites, with about one-third (33% and 32%, respectively) of the edge activity in these regions consisting of purpose-built edge sites. That compares with 15% in China and 18% in India, the two regions with the lowest percentage of participants supporting purpose-built edge sites today.

China does, however, seem poised to close the gap. The region had the highest percentage of participants deploying or planning new edge sites at 48%. Asia Pacific was second at 37%. Regions with the smallest percentage of participants deploying or planning new edge sites were EMEA (28%) and LATAM (30%).

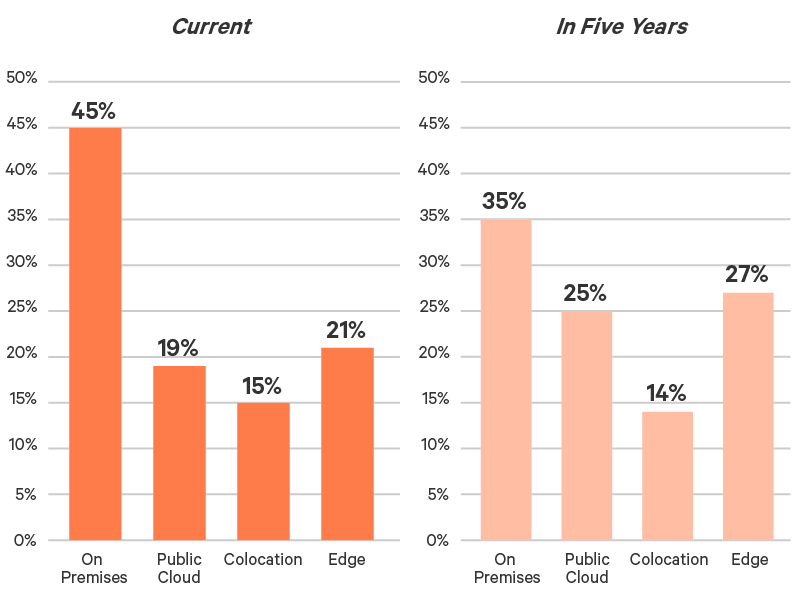

The influx of new edge sites will increase dependence on the network edge. Participants project the share of IT infrastructure deployed at the edge to grow from 21% today to 27% in five years. In relative terms, they expect the share of centralized, on-premises IT infrastructure to decrease by 22%, while the share of resources in the cloud increases by 32% and the share at the edge by 29% (Figure 2).
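The move from 21% to 27% and the 29% growth figure are consistent only if the 29% is read as growth relative to today's edge share, not as a gain in percentage points. A short Python sketch of that arithmetic, using only the edge figures quoted above (the function names are illustrative, not from the survey):

```python
def point_change(current_pct: float, future_pct: float) -> float:
    """Change in a share, measured in percentage points."""
    return future_pct - current_pct


def relative_change(current_pct: float, future_pct: float) -> float:
    """Change in a share relative to its starting value, in percent."""
    if current_pct == 0:
        raise ValueError("relative change is undefined for a zero starting share")
    return (future_pct - current_pct) / current_pct * 100


# Edge share of IT infrastructure reported by participants (today vs. five years out).
edge_today, edge_in_five_years = 21.0, 27.0

print(f"Absolute change: {point_change(edge_today, edge_in_five_years):+.0f} percentage points")
print(f"Relative change: {relative_change(edge_today, edge_in_five_years):+.1f}%")
```

The sketch reports a 6-point gain, which works out to about 28.6% relative growth, matching the rounded 29% in the text.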

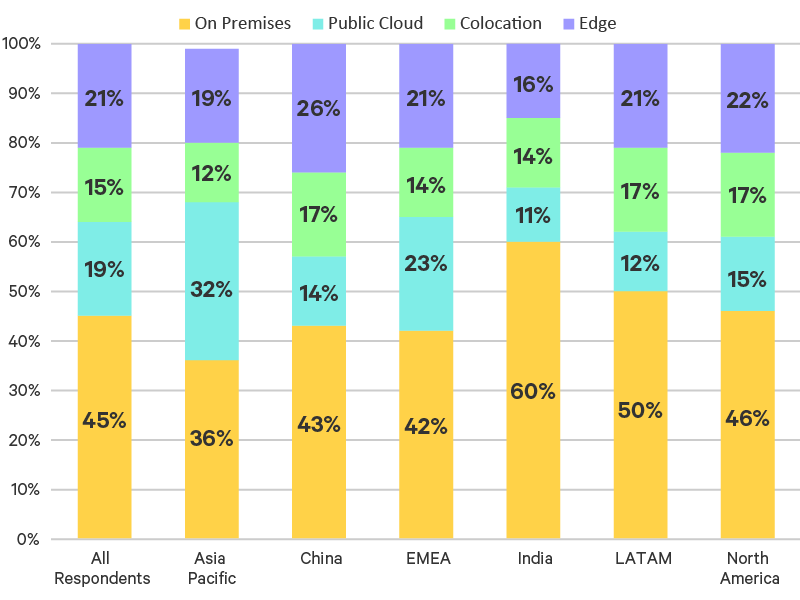

India and LATAM had the highest percentage of IT on premises today at 60% and 50%, respectively (Figure 3). Asia Pacific had the highest percentage of resources in the public cloud at 32%. China had the largest percentage of resources at the edge (26%). Five years from now, participants from China expect, on average, that the percent of IT infrastructure on the edge will grow to 31%. Participants from LATAM expect to see the biggest shift to the edge, going from 21% in 2021 to 30% in 2026.

Figure 2: Percent of IT resources deployed in different environments today and in five years.

Figure 3: Percent of IT resources deployed in different environments by region.

Inside Today’s Edge

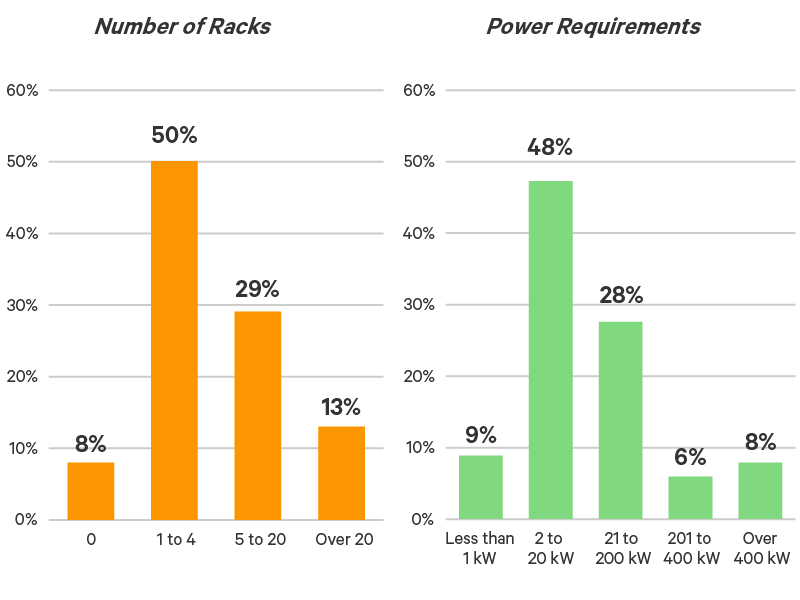

The “typical” edge site today houses between one and four IT equipment racks with power requirements between 2 and 20 kW, although larger sites are common. Forty-two percent of sites consist of more than four IT equipment racks and have power requirements above 20 kW (Figure 4).

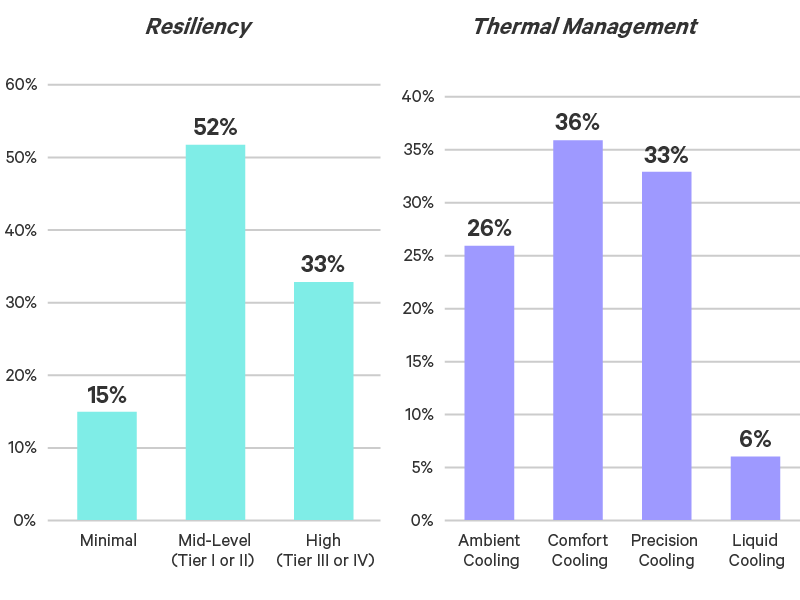

Legacy edge design practices seem to prevail in today’s landscape. More than half (52%) of participants are employing a level of resiliency comparable to that of Uptime Institute’s Tier I or Tier II. A third of participants (33%) are designing their edge sites to achieve uptime comparable to Tier III or Tier IV availability levels (Figure 5).

The tolerance for edge downtime evident in these resiliency levels is reinforced by decisions made around edge cooling and management.

- Only 39% of participants are using dedicated precision cooling systems to deal with heat generated by edge sites, even though more than 90% of sites use at least 2 kW of power, the threshold at which dedicated precision cooling is recommended (Figure 5).

- In total, 6% are employing liquid cooling, suggesting that high-density edge computing environments are becoming more common.

- Similar vulnerabilities are evident in current edge management practices. A third of participants (33%) rely on IT staff located at or near edge facilities to support operations, a strategy that could create challenges as the number of edge deployments increases. An additional 25% support edge locations with centralized IT staff who must travel to remote sites to perform regular maintenance and troubleshooting. This approach can also strain IT staff as the number of sites grows and can significantly extend downtime when failures occur.

- A more scalable solution is remote access to, and monitoring of, edge technologies by centralized IT staff, an approach employed by 30% of participants today. An additional 12% outsource management of their edge sites.
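The 2 kW threshold lends itself to a simple inventory check. The Python sketch below flags sites whose IT load meets or exceeds the threshold but that lack dedicated precision cooling. The `EdgeSite` record, the site names, and their loads are hypothetical examples rather than survey data, and treating 2 kW as a hard cutoff is a simplification.

```python
from dataclasses import dataclass
from typing import List

# Threshold cited in the survey report for recommending dedicated precision cooling.
PRECISION_COOLING_THRESHOLD_KW = 2.0


@dataclass
class EdgeSite:
    """Hypothetical inventory record for one edge site."""
    name: str
    racks: int
    it_load_kw: float
    has_precision_cooling: bool


def needs_precision_cooling(site: EdgeSite) -> bool:
    """True when the site's IT load is at or above the recommended threshold."""
    return site.it_load_kw >= PRECISION_COOLING_THRESHOLD_KW


def cooling_gaps(sites: List[EdgeSite]) -> List[str]:
    """Names of sites that should have precision cooling but do not."""
    return [s.name for s in sites if needs_precision_cooling(s) and not s.has_precision_cooling]


# Illustrative inventory (hypothetical values).
sites = [
    EdgeSite("branch-office", racks=1, it_load_kw=1.5, has_precision_cooling=False),
    EdgeSite("plant-floor", racks=3, it_load_kw=12.0, has_precision_cooling=False),
    EdgeSite("regional-hub", racks=6, it_load_kw=35.0, has_precision_cooling=True),
]

print(cooling_gaps(sites))
```

Here only `plant-floor` is flagged: `branch-office` is below the threshold, and `regional-hub` already has precision cooling.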

Figure 4: Number of racks and power requirements for current edge deployments.

Figure 5: Resiliency levels and thermal management strategies for current edge sites.

The Shift From Legacy to Purpose-Built Edge Sites

Emerging Edge Use Cases

Legacy edge sites were generally specified based on the power requirements of the IT load, regardless of the use case or application being supported. Today’s emerging use cases have more demanding requirements, and sites must be configured not only for IT requirements but also for the latency, bandwidth, availability, and security requirements of the use case.

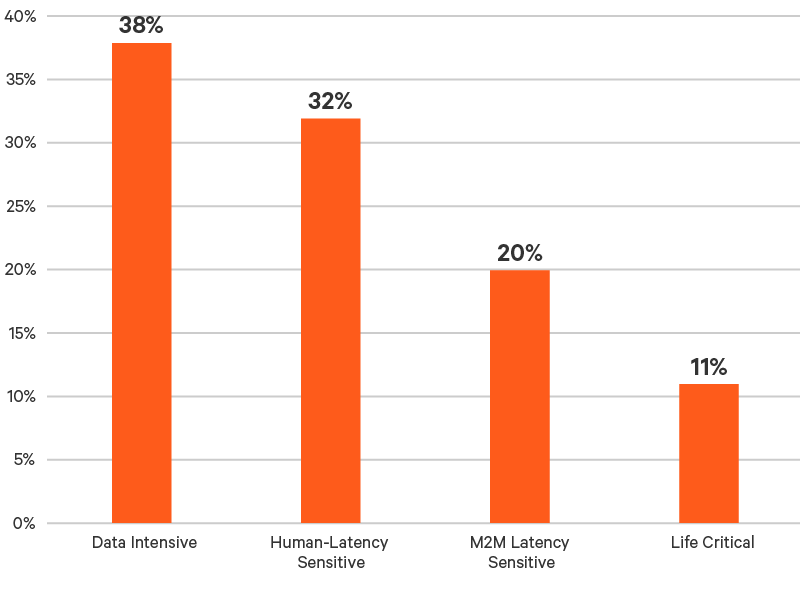

Vertiv has classified these use cases into four categories:

- Data Intensive edge use cases are those managing such a high volume of data that it is impractical to transfer it to the cloud, such as video streaming and IoT applications. With the explosion in data volumes over the past five years, Data Intensive use cases are the most mature of the new generation of edge use cases. More than a third of participants (38%) classified their edge investments as being driven by Data Intensive use cases (Figure 6).

- Human-Latency Sensitive use cases are those where latency issues can negatively impact humans’ experience with technology, such as virtual reality and natural language processing. This was the second most popular edge use case category, with 32% of participants saying their edge investments are being driven by Human-Latency Sensitive use cases.

- Machine-to-Machine Latency Sensitive use cases generally require even lower latency than Human-Latency Sensitive use cases, because of the speed at which machines can process data. Key use cases in this category include smart grid and smart security systems. One in five participants (20%) said their investments in edge technology were being driven by Machine-to-Machine Latency Sensitive use cases.

- Life Critical use cases, which directly impact human health and safety, are the most demanding and generally the least mature. The best-known Life Critical use cases are autonomous vehicles and robots, and digital health applications. About one in 10 participants (11%) said their edge investments were driven by Life Critical use cases.

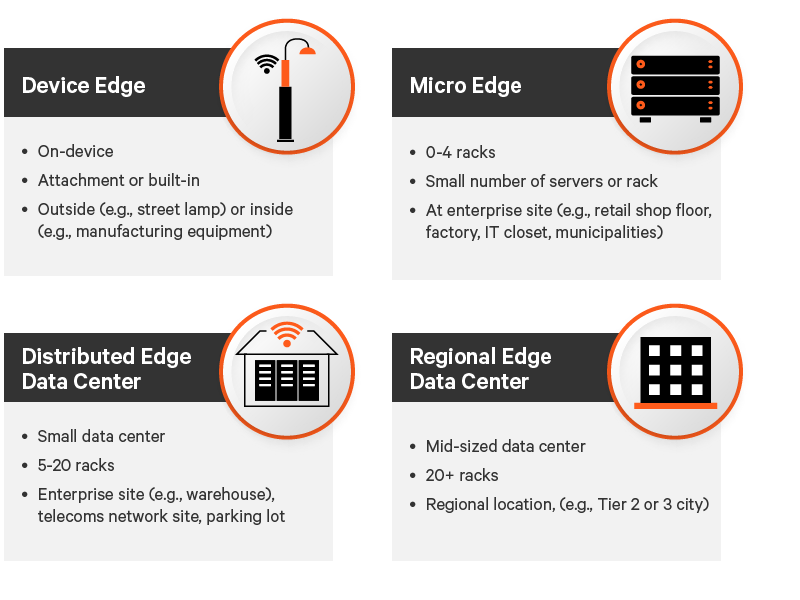

Edge Archetypes enable organizations to clarify their edge strategy as they help determine the edge data center models that are required by different use cases. Depending on the specific requirements of the use case, edge networks may include all or some of the edge computing models defined in the report Archetypes 2.0: Deployment-Ready Edge Infrastructure Models (Figure 7).

Figure 6: Percent of edge computing sites currently supporting each edge archetype.

Figure 7: Edge computing models.

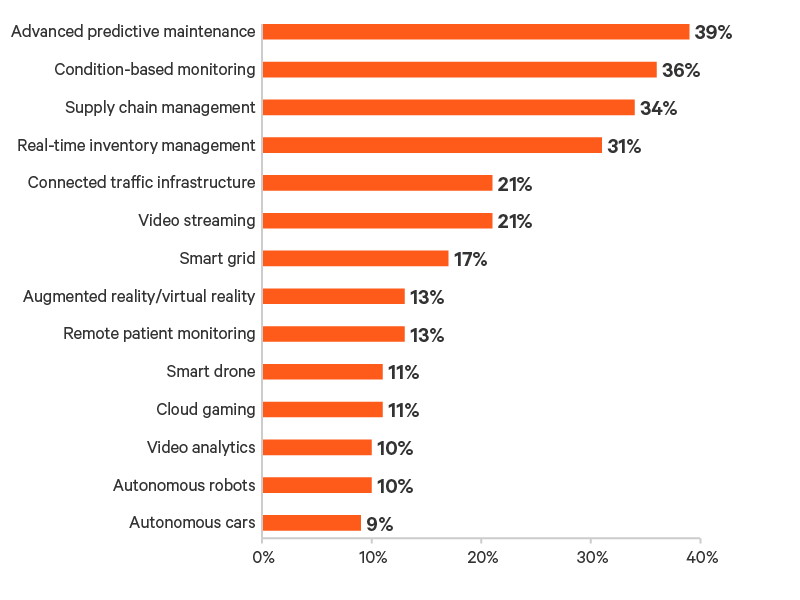

Reducing manufacturing equipment downtime and optimizing supply chains were the goals behind the most popular use cases driving edge investments. Predictive maintenance was the leading use case cited by participants (39%), followed by condition-based maintenance (36%), supply chain management (34%), and real-time inventory management (31%) (Figure 8).

These results reflect the relative maturity of the various use cases, as well as the number of sites required to support them. Video streaming, cited by 21% of participants as driving edge adoption, is one of the most mature edge use cases and likely accounts for higher data volumes today than other use cases. However, it is generally not as latency sensitive and is typically supported by Regional or Distributed Edge sites. Predictive maintenance and condition-based monitoring are more likely to be supported by Micro Edge sites located close to equipment, resulting in a larger number of sites supporting these use cases. In addition, these use cases can deliver value in any industry where equipment downtime can disrupt operations, including manufacturing, warehousing and distribution, oil and gas production, and mining.

Figure 8: Use cases driving adoption of edge computing.

Edge Infrastructure Priorities

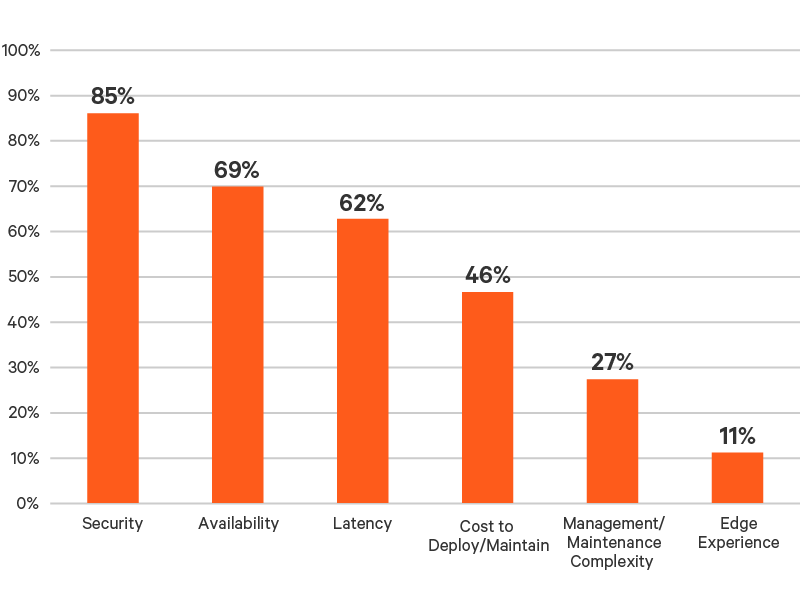

Participants recognize the challenges they face when expanding their edge networks to support these emerging use cases. When asked to prioritize the challenges, 85% put security among their top three challenges, followed by availability at 69% and latency at 62% (Figure 9).

For edge sites, both physical and data security must be considered. By distributing IT resources, edge computing complicates data security management. However, in some use cases, such as healthcare, edge sites can reduce the vulnerability of data by keeping it close to the point of generation and minimizing exposure to threats created by transmitting data to the cloud. In all cases, lockable racks and enclosures can be used to protect the physical security of IT assets from unauthorized access.

Integrated systems, in which all infrastructure is installed in racks or enclosures at the factory, generally provide this level of physical security and can also be configured with sensors that generate alerts when the door is opened by unauthorized personnel.

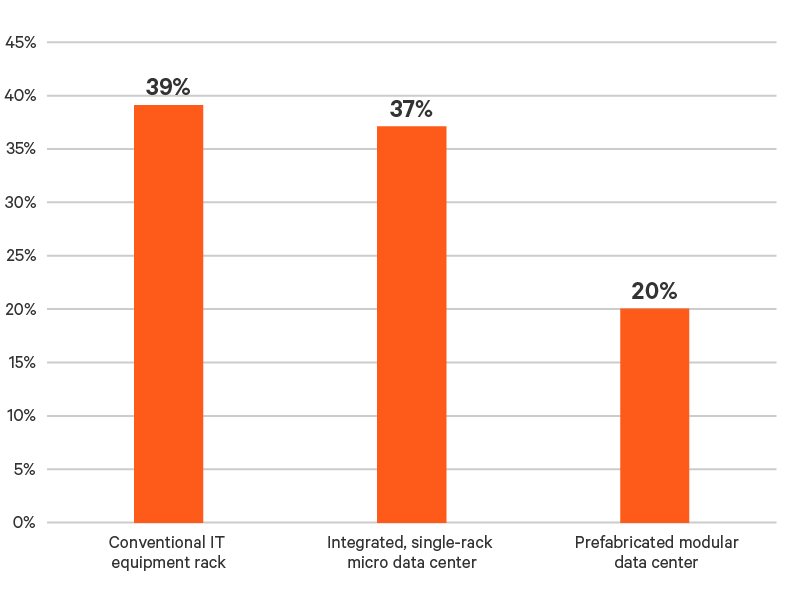

Integrated systems also address availability concerns by ensuring power protection, power distribution, thermal management, and monitoring systems are matched to application requirements. They can even reduce latency by enabling IT to be deployed in environments not designed for it. These systems have the added benefit of reducing deployment times by eliminating the need to “build” systems on site. More than half of participants (57%) are already leveraging factory integration in their edge sites through either single-rack systems or prefabricated data center modules (Figure 10).

Figure 9: How participants prioritize edge computing challenges.

Figure 10: Percent of participants using integrated and modular edge infrastructure solutions.

Sustainability and the Edge

Data center sustainability — reducing emissions, improving resource utilization, and eliminating waste — has become a major priority for IT and data center operations. Participants clearly see an opportunity to extend the current focus on data center sustainability to the edge.

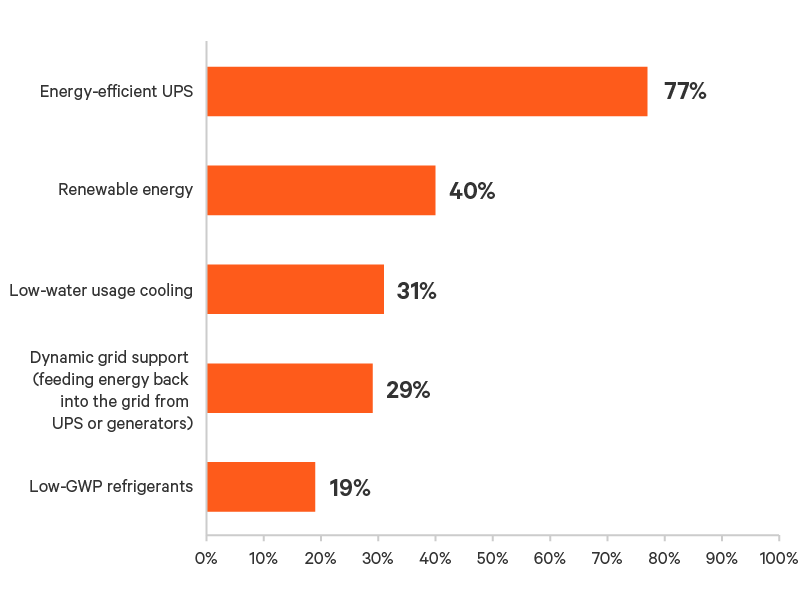

The easiest way to enhance edge operating efficiency is to deploy energy-efficient technologies. More than three-quarters of participants (77%) have deployed or are planning to deploy energy-efficient UPS systems to support their edge sites. New mid-size double-conversion UPS systems can achieve operating efficiencies above 98% through dynamic online optimization that reduces energy losses associated with power conditioning when utility power is acceptable. They protect equipment when incoming power quality degrades by seamlessly transferring to double-conversion mode.
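To put UPS efficiency figures in perspective, losses can be estimated from load and efficiency: input power equals output power divided by efficiency, and the difference is lost as heat. The Python sketch below assumes a constant 10 kW IT load running all year and compares the 98% figure above with an assumed 94% for an older unit. Both the load and the 94% comparison point are illustrative assumptions, not survey data, and real UPS efficiency varies with load.

```python
HOURS_PER_YEAR = 8760


def ups_annual_loss_kwh(it_load_kw: float, efficiency: float) -> float:
    """Energy lost in the UPS over one year at a constant load.

    Input power = output power / efficiency, so the loss is
    load * (1 / efficiency - 1), integrated over a year.
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    input_kw = it_load_kw / efficiency
    return (input_kw - it_load_kw) * HOURS_PER_YEAR


load_kw = 10.0  # hypothetical constant edge IT load
for eff in (0.94, 0.98):  # 0.94 is an assumed comparison point
    print(f"{eff:.0%} efficient: {ups_annual_loss_kwh(load_kw, eff):,.0f} kWh/yr lost")
```

Under these assumptions the sketch reports about 5,591 kWh per year lost at 94% efficiency versus about 1,788 kWh at 98%, before counting any cooling energy needed to remove that heat.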

Participants are also going beyond energy-efficient technologies and embracing more disruptive sustainability technologies such as renewable energy and dynamic grid support. For thermal management on the edge, water-efficient and low-GWP technologies appear to be gaining traction (Figure 11).

Figure 11: Technologies being deployed to enhance edge sustainability.

Key Takeaways

While there is much hype about the “edge revolution,” the reality today is that the migration to edge computing is more of an evolution. Organizations are expanding their use of edge computing, but they are doing so in a controlled and managed way that is driving a gradual transition from the legacy edge to the purpose-built edge.

That is not to say the changes under way are insignificant. The shift of IT resources to the edge and the cloud could have widespread implications for the ability to support new applications, create new customer experiences, and effectively manage limited IT resources. In this context, the pace of change documented by the survey seems to strike an appropriate balance between capitalizing on emerging use cases and minimizing disruption to operations.

The survey does raise some concerns about whether organizations can effectively support edge computing sites that achieve the level of availability emerging use cases may require.

Thermal management practices, in particular, must evolve to account for the higher power requirements of current and future sites. Relying on comfort cooling for sites above 2 kW has proven inadequate, as these systems lack the precision, capacity, and reliability IT systems require. Greater use of precision cooling technologies, which have adapted to the growth in edge computing through edge-specific designs, will be required to deliver the availability operators expect from their edge sites.

Management practices also need to mature. Secure remote access and monitoring technologies are well established and provide visibility into, and control over, edge infrastructure and IT systems. They give early warning of potential issues, such as heat build-up, and enable remote troubleshooting of IT systems. These systems also lay the foundation for applying predictive maintenance, the most popular edge use case identified by this survey, to the edge computing sites that support emerging use cases.
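Early warning of heat build-up can be illustrated with a small check that combines an absolute limit with a trend test on inlet temperature readings. In this Python sketch, the 27 °C limit is the upper end of ASHRAE's recommended inlet temperature range, while the window size and rate-of-rise limit are hypothetical values chosen for illustration. `HeatMonitor` is not an API from any particular monitoring product.

```python
from collections import deque
from typing import Deque, Optional

MAX_INLET_C = 27.0             # upper end of ASHRAE's recommended inlet range
MAX_RISE_C_PER_READING = 0.5   # hypothetical rate-of-rise limit


class HeatMonitor:
    """Hypothetical early-warning check over a sliding window of readings."""

    def __init__(self, window: int = 5) -> None:
        if window < 2:
            raise ValueError("window must hold at least two readings")
        self.readings: Deque[float] = deque(maxlen=window)

    def add(self, temp_c: float) -> Optional[str]:
        """Record a reading and return an alert message, or None if all is well."""
        self.readings.append(temp_c)
        # Absolute limit: the inlet is already too hot.
        if temp_c > MAX_INLET_C:
            return f"ALERT: inlet {temp_c:.1f} C above {MAX_INLET_C:.1f} C"
        # Trend: average rise per reading across a full window.
        if len(self.readings) == self.readings.maxlen:
            avg_rise = (self.readings[-1] - self.readings[0]) / (len(self.readings) - 1)
            if avg_rise > MAX_RISE_C_PER_READING:
                return f"WARNING: temperature rising {avg_rise:.2f} C per reading"
        return None


monitor = HeatMonitor(window=5)
for t in [22.0, 22.4, 23.1, 23.9, 24.8, 25.6]:
    message = monitor.add(t)
    if message:
        print(message)
```

Checking the trend as well as the absolute limit is what provides the early warning: a steady rise is flagged while the inlet temperature is still below the limit.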

Participant Profile

Participating Industries

Participants in the survey represented a range of industries, reflecting the broad applicability of edge computing. Industries with significant representation are shown in Figure 12. Industries with less than 3% representation are aggregated into the “All other industries” category, which includes aerospace, broadcast and entertainment, healthcare, military/defense, power/gas transmission and distribution, and water supply/sewage.

Figure 12: Industries represented in survey.

Distribution of Participants by Region

The global What’s Your Edge survey included participants from all major regions of the world (Figure 13).

Figure 13: Regional distribution of survey participants.

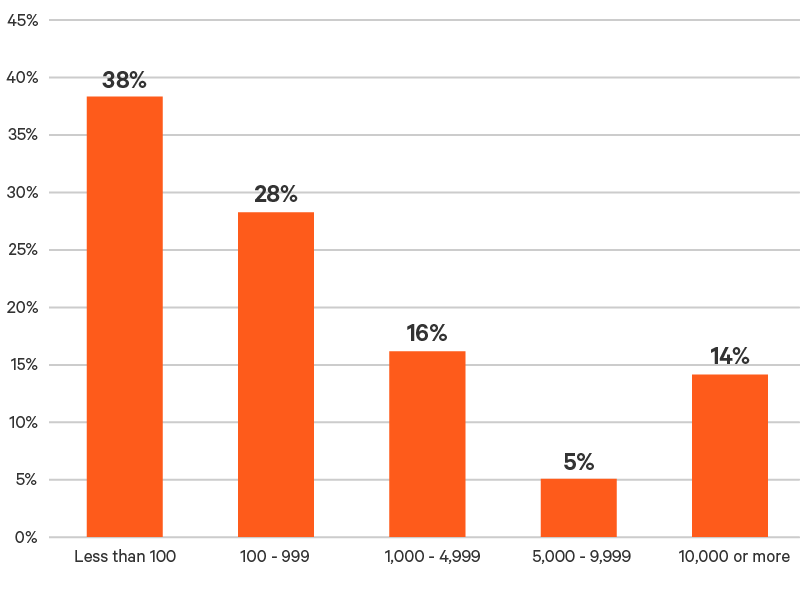

Size of Organization

Edge computing isn’t just being deployed by large organizations. Smaller organizations are also finding value in edge computing. It’s also important to remember that organization size as measured by number of employees doesn’t necessarily correlate with the scale of IT resources deployed. Colocation providers may have low headcounts but large IT networks (Figure 14).

Figure 14: Size of organizations participating in survey as measured by number of employees.