Data center operators are evaluating liquid cooling technologies to increase energy efficiency as processing-intensive computing applications grow. According to the Dell’Oro Group, liquid cooling market revenue will approach $2B by 2027, with a 60% CAGR for the years 2020 to 2027, as organizations adopt more cloud services, use artificial intelligence (AI) to power advanced analytics and automated decision making, and enable blockchain and cryptocurrency applications.

AI Hardware Implications for Thermal Management, © 2023 Dell’Oro Group

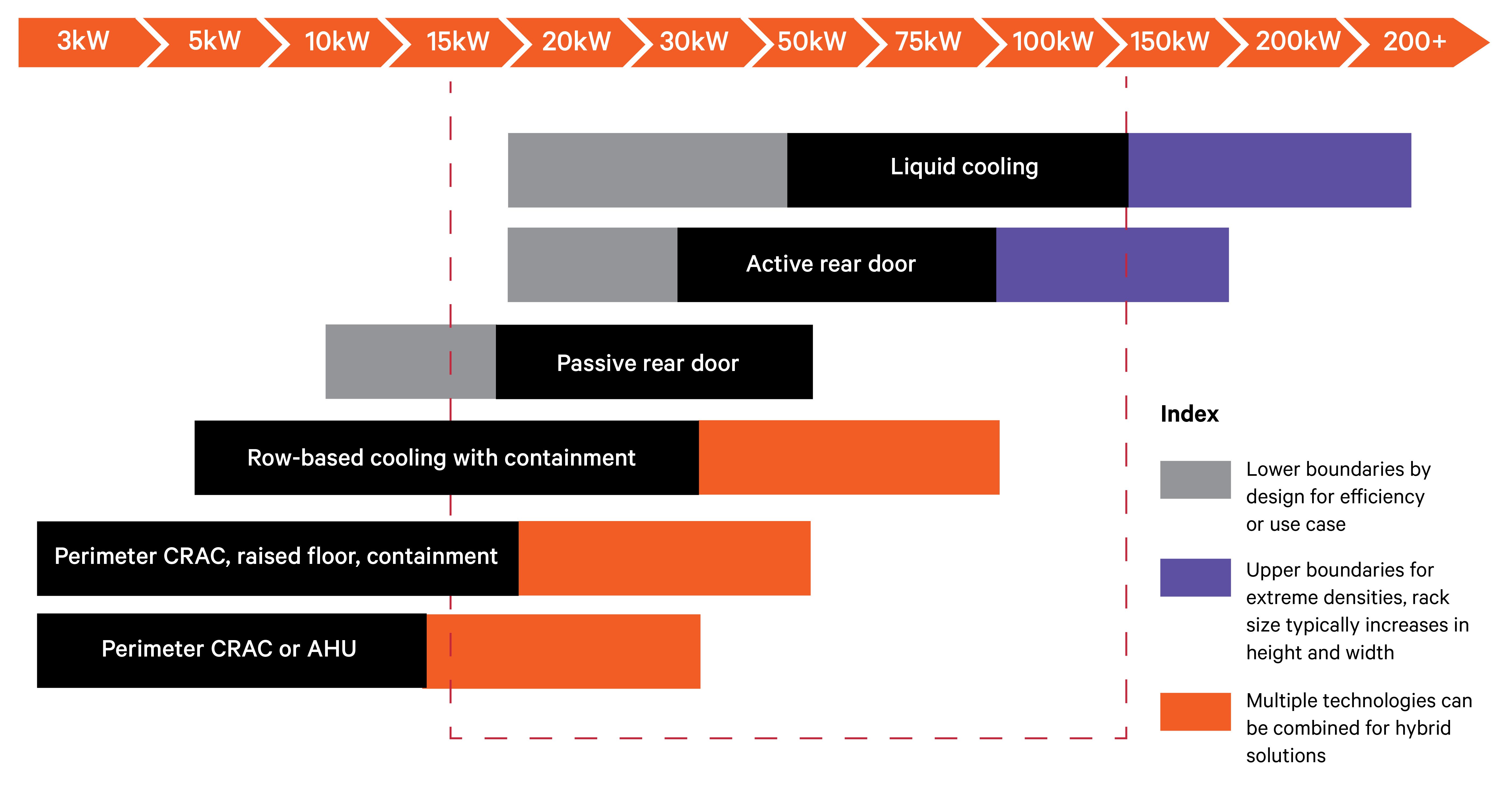

Currently, data centers support rack power requirements in excess of 20 kilowatts (kW), but the market is headed to 50 kW or more. Newer-generation central processing units (CPUs) and graphics processing units (GPUs) have higher thermal density properties than previous-generation architectures. In addition, server manufacturers are packing more CPUs and GPUs into each rack to meet the accelerating demand for high-performance computing and AI applications.

Air cooling is now showing its limits: traditional systems can’t cool these high-density racks efficiently and sustainably.

As a result, data center operators are investigating their liquid cooling options. Liquid cooling leverages the higher thermal transfer properties of water or other fluids to support efficient and cost-effective cooling of high-density racks and can be up to 3000 times more effective than using air. Long proven for mainframe and gaming applications, liquid cooling is expanding to protect rack-mounted servers in data centers worldwide. Vertiv has created a wide array of resources to help you understand the challenges, opportunities, and technical requirements that liquid cooling presents. These resources will help you decide how to apply and scale liquid cooling across your data center footprint.
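The scale of that advantage can be checked with a rough back-of-the-envelope calculation. The sketch below compares the volume of air and of water needed to carry away the heat of a 50 kW rack. The fluid properties are textbook approximations near room temperature, and the 10 K temperature rise is an assumption chosen for illustration, not a design value from Vertiv.

```python
# Textbook approximate fluid properties near room temperature (assumed values).
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"density": 998.0, "cp": 4182.0}  # kg/m^3, J/(kg*K)


def volumetric_heat_capacity(fluid):
    """Heat absorbed per cubic metre per kelvin of temperature rise, in J/(m^3*K)."""
    return fluid["density"] * fluid["cp"]


def required_flow_m3_per_s(heat_w, fluid, delta_t_k):
    """Volume flow needed to carry heat_w watts at a temperature rise of delta_t_k."""
    return heat_w / (volumetric_heat_capacity(fluid) * delta_t_k)


if __name__ == "__main__":
    rack_heat_w = 50_000.0  # 50 kW rack, the density the market is heading toward
    delta_t_k = 10.0        # assumed coolant temperature rise, same for both fluids

    air_flow = required_flow_m3_per_s(rack_heat_w, AIR, delta_t_k)
    water_flow = required_flow_m3_per_s(rack_heat_w, WATER, delta_t_k)
    ratio = volumetric_heat_capacity(WATER) / volumetric_heat_capacity(AIR)

    print(f"Air:   {air_flow:.2f} m^3/s")
    print(f"Water: {water_flow * 60_000:.1f} L/min")  # 1 m^3/s = 60,000 L/min
    print(f"Water carries about {ratio:.0f}x more heat per unit volume")
```

Under these assumptions the rack needs roughly 4.1 m³/s of air but only about 72 L/min of water, and the volumetric heat capacity ratio lands in the low thousands, the same order of magnitude as the figure quoted above.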

DCD> Keeping IT Cool: HPC

Watch “Is direct-to-chip cooling leading the pack?”

This webinar reviews the current state of cold plate technology and explores the reasons behind its adoption and the fundamental role it will play in cooling the chips of the future. Beyond superior heat transfer, direct-to-chip systems are also easier to retrofit than their immersion counterparts. So, could this lead to a more streamlined rollout, a more incremental adoption of technology in a change-phobic industry, and a better ROI? The session also asks whether, and how soon, cold plate technologies will be deployed at scale.


Understanding Liquid Cooling Options and Performance

Data center operators are pursuing one of three paths with liquid cooling: developing liquid-only data centers, future-proofing air-cooled facilities with new infrastructure to support liquid-cooled racks in the future, or integrating liquid cooling into current air-cooled facilities that lack the infrastructure to support it. Most will likely choose the last path to gain capacity that meets near-term business needs and provides rapid return on investment.

Installing liquid cooling can get complicated. Data center teams will want to work with a partner to consider key issues, including plumbing requirements, cooling distribution, balancing capacity, risk mitigation strategies and heat rejection systems.

Your options for liquid cooling include:

- Rear-door heat exchangers – Passive or active heat exchangers replace the rear door of the IT equipment rack with a liquid heat exchanger. These systems can be used in conjunction with air-cooling systems to cool environments with mixed rack densities.

- Direct-to-chip liquid cooling – Direct-to-chip cold plates sit atop the board’s heat-generating components to draw off heat through single-phase cold plates or two-phase evaporation units. These cooling technologies can remove about 70-75% of the heat generated by the equipment in the rack, leaving 25-30% that must be removed by air-cooling systems.

- Immersion cooling – Single-phase and two-phase immersion cooling systems submerge servers and other components in the rack in a thermally conductive dielectric liquid or fluid, eliminating the need for air cooling. This approach maximizes the thermal transfer properties of liquid and is the most energy-efficient form of liquid cooling on the market.
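To make the direct-to-chip split concrete, the following minimal sketch divides a rack's heat load between the liquid loop and the room air using the 70-75% capture range cited above. The 50 kW rack and the function name `split_rack_heat` are hypothetical, chosen only for illustration.

```python
def split_rack_heat(rack_kw, liquid_fraction):
    """Return (liquid_kw, air_kw) for a rack, given the fraction of heat captured by cold plates."""
    if not 0.0 <= liquid_fraction <= 1.0:
        raise ValueError("liquid_fraction must be between 0 and 1")
    liquid_kw = rack_kw * liquid_fraction
    return liquid_kw, rack_kw - liquid_kw


if __name__ == "__main__":
    rack_kw = 50.0  # hypothetical high-density rack
    for fraction in (0.70, 0.75):
        liquid_kw, air_kw = split_rack_heat(rack_kw, fraction)
        print(f"{fraction:.0%} captured: {liquid_kw:.1f} kW to liquid, {air_kw:.1f} kW to air")
```

For a 50 kW rack, this leaves 12.5 to 15 kW for the air-cooling system, so the residual air load of a direct-to-chip rack still needs to be planned for.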

6 Things to Consider When Introducing Liquid Cooling into Air-Cooled Data Centers

While liquid-only data centers are being developed and some new air-cooled data centers are being designed to accommodate liquid-cooled racks in the future, the most common scenario operators face today is integrating liquid cooling into existing air-cooled facilities that lack the infrastructure to support it.

This can, unsurprisingly, get complicated. If you’re considering bringing liquid into an air-cooled data center, here are some key issues you should be prepared to address.

The type of fluid used, and the heat load-to-liquid ratio, significantly influence the overall system design of a hybrid facility. Higher heat-to-liquid ratios reduce the demand on air cooling infrastructure. The variables of heat load, liquid flow rates, and pressure work together to contribute to the overall liquid cooling solution and should be considered early in the process of bringing liquid to the rack.

The most fundamental component of liquid cooling infrastructure — the piping that carries the cooling fluid to the rack — can also be the most challenging to deploy within an existing facility.

In most cases, a phased approach is required to minimize disruption to operations. In colocation, operators add plumbing to one or two suites in response to known customer requirements. As customer demands increase, they’ll expand to additional suites. The same approach is being applied in the enterprise where a corner section of the data center may be devoted to liquid-cooled racks.

For raised floor data centers, poorly planned piping runs can obstruct airflow. Computational fluid dynamics (CFD) simulations should be used to configure piping to minimize the impact on airflow through the floor.

In slab data centers, piping is generally run over aisles and supported from the ceiling structure, with drip pans under all fittings to minimize the impact of potential leaks. Wetted material compatibility and choosing the right type of fittings are also critical to the long-term success of a liquid cooling deployment.

Distribution

With liquid cooling, you need to establish a secondary cooling loop in the facility that allows precise control of the liquid being distributed to the rack. The key component in this loop is the coolant distribution unit (CDU). The CDU provides temperature and flow rate control and the ability to maintain liquid hygiene by using filtration to capture debris.

For smaller projects, a CDU with a liquid-to-air heat exchanger can simplify deployment, assuming the air-cooling system can handle the heat rejected from the CDU. In most cases, the CDU will use a liquid-to-liquid heat exchanger to capture the heat returned from the racks and reject it through the chilled water system. While CDUs can be positioned on the data center's perimeter, most units are designed to fit within the row so they can be located in proximity to the racks they support.

Vertiv™ Liebert® XDU Coolant Distribution Unit functions as a liquid-to-air heat exchanger for cooling chips.

Balancing capacity

The most commonly used liquid cooling methods being deployed today, rear-door heat exchangers and direct-to-chip cold plates, work with air cooling systems rather than independently. Immersion cooling, both single- and two-phase, is also making headway.

You’ll need to determine how much of the total heat load each system will handle, how much air-cooling capacity the liquid system will displace, and where the liquid cooling system may be introducing new demands on air-cooling systems. Rear-door heat exchangers, for example, expel cooled air into the data center, and the air-cooling system must be able to handle the heat from the rack if one or more rear doors are open for service. In rear-door applications, a chiller is typically used to achieve desired water temperatures.
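A room-level version of this balance can be sketched as below. Everything here is an assumption for illustration: the rack mix, the fraction of heat each cooling type leaves in the room air, and the simplification that a closed rear door neutralizes its rack's heat while an open one releases all of it into the room.

```python
from dataclasses import dataclass

# Assumed fraction of each rack's heat that ends up in the room air.
AIR_FRACTION = {
    "air": 1.0,             # conventional rack: everything goes to room air
    "direct_to_chip": 0.3,  # upper end of the 25-30% residual for cold plates
    "rear_door": 0.0,       # assumption: a closed door neutralizes the rack's heat
}


@dataclass
class Rack:
    name: str
    power_kw: float
    cooling: str  # one of the keys in AIR_FRACTION


def air_load_kw(racks, open_rear_doors=0):
    """Heat the air-cooling system must absorb, with the N largest rear-door racks open for service."""
    load = sum(r.power_kw * AIR_FRACTION[r.cooling] for r in racks)
    rear = sorted((r.power_kw for r in racks if r.cooling == "rear_door"), reverse=True)
    # An open door is treated as releasing the rest of that rack's heat into the room.
    extra = sum(p * (1.0 - AIR_FRACTION["rear_door"]) for p in rear[:open_rear_doors])
    return load + extra


if __name__ == "__main__":
    racks = (
        [Rack(f"air-{i}", 8.0, "air") for i in range(10)]
        + [Rack(f"dtc-{i}", 50.0, "direct_to_chip") for i in range(4)]
        + [Rack(f"rdhx-{i}", 30.0, "rear_door") for i in range(4)]
    )
    installed_air_kw = 160.0  # hypothetical installed air-cooling capacity
    for doors in (0, 1):
        load = air_load_kw(racks, open_rear_doors=doors)
        status = "OK" if load <= installed_air_kw else "SHORT"
        print(f"{doors} rear door(s) open: {load:.0f} kW air load -> {status}")
```

In this hypothetical room, the air system covers normal operation but falls short once a single rear door is opened, which is the kind of service scenario the capacity plan has to account for.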

To remove barriers to liquid cooling implementation in an air-cooled environment, the liquid cooling-ready Vertiv Liebert AFC chillers allow simultaneous management of air cooling and liquid cooling, integrating indoor CRAH units and CDUs and supporting a smooth transition to a liquid-cooled data center.

The biggest obstacle to the growth of liquid cooling has been concerns over the risks associated with moving liquid to the rack. Today's liquid cooled systems minimize this risk by limiting the volume of fluids being distributed and integrating leak detection technology in system components and at critical locations across the piping system.

When dielectric fluids are used, the risk of equipment damage from leaks is removed, but the high cost of these fluids justifies including leak detection systems similar to those used in water-based systems. The Open Compute Project has released an excellent paper on leak detection technologies and strategies, Leak Detection and Integration, which is recommended reading for anyone bringing liquid into their data center.

Heat recovery can increase the efficiency of the chilled water system by allowing heat captured from the data center to be reused for other purposes. Instead of being rejected, the heat is captured by the system and can be used to meet heating demand in other parts of the building, neighboring buildings, or a district heating network. This strategy can be applied to legacy data centers, even when captured heat temperatures are low, by using a heat pump to increase the temperature.
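The heat pump step follows a simple energy balance: the heat delivered equals the heat recovered from the data center plus the electrical energy the heat pump consumes. The sketch below applies that balance. The recovered load and the coefficient of performance (COP) are hypothetical values, and a real COP depends on the temperature lift and the equipment selected.

```python
def heat_pump_output(recovered_kw, cop):
    """Return (delivered_kw, electrical_kw) for a heat pump lifting recovered heat.

    COP is defined as delivered heat divided by electrical input, and
    delivered heat = recovered heat + electrical input.
    """
    if cop <= 1.0:
        raise ValueError("COP must be greater than 1 for this balance to hold")
    electrical_kw = recovered_kw / (cop - 1.0)
    return recovered_kw + electrical_kw, electrical_kw


if __name__ == "__main__":
    recovered_kw = 500.0  # hypothetical heat captured from the liquid loop
    cop = 4.0             # assumed heating COP
    delivered_kw, electrical_kw = heat_pump_output(recovered_kw, cop)
    print(f"Delivered heat: {delivered_kw:.0f} kW using {electrical_kw:.0f} kW of electricity")
```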

Introducing liquid cooling into an air-cooled data center requires careful planning and engineering, but the technologies and best practices are available today to support a successful and minimally disruptive deployment.

Download Vertiv's whitepaper, Understanding Data Center Liquid Cooling Options and Infrastructure Requirements, to learn more.

How to Calculate the Impact of Liquid Cooling on Efficiency

As stated above, the adoption of data center liquid cooling continues to gain momentum based on its ability to deliver more efficient and effective cooling of high-density IT racks. Yet, data center designers and operators have lacked data that could be used to project the impact of liquid cooling on data center efficiency and help them optimize the deployment of liquid cooling for energy savings and efficiency.

To fill that void, a team of specialists from NVIDIA and Vertiv conducted the first major analysis of the impact of liquid cooling on data center PUE and energy consumption. The full analysis was published by the American Society of Mechanical Engineers (ASME) in the paper, Power Usage Effectiveness Analysis of a High-Density Air-Liquid Hybrid Cooled Data Center.

Key Takeaways From the Data Center Liquid Cooling Energy Efficiency Analysis

Power Usage Effectiveness

PUE is not a good measure of data center liquid cooling efficiency. Unlike air cooling, liquid cooling affects the numerator (total data center power) and the denominator (IT equipment power) in the PUE calculation, making it ineffective for comparing the efficiency of liquid and air-cooling systems.

Total Usage Effectiveness

- Alternate metrics such as TUE (Total Usage Effectiveness) will prove more helpful in guiding design decisions related to the introduction of liquid cooling in an air-cooled data center.

- TUE = ITUE × PUE, where ITUE = total energy into the IT equipment / total energy into the compute components

- Equivalently, TUE = total power to the data center / total power to the compute, processing, and storage components
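The relationship between these metrics can be illustrated with a short sketch. All power figures below are hypothetical and are not the values from the NVIDIA and Vertiv study. The example assumes that liquid cooling reduces both the overhead inside the IT equipment (for example, server fan power, which is counted as IT power) and the facility overhead.

```python
from dataclasses import dataclass


@dataclass
class PowerBreakdown:
    compute_kw: float            # CPUs, GPUs, memory, storage
    it_overhead_kw: float        # server fans and power supply losses inside IT equipment
    facility_overhead_kw: float  # cooling plant, power distribution, lighting

    @property
    def it_kw(self):
        return self.compute_kw + self.it_overhead_kw

    @property
    def total_kw(self):
        return self.it_kw + self.facility_overhead_kw

    @property
    def pue(self):
        return self.total_kw / self.it_kw

    @property
    def itue(self):
        return self.it_kw / self.compute_kw

    @property
    def tue(self):
        return self.total_kw / self.compute_kw  # equal to itue * pue


if __name__ == "__main__":
    # Hypothetical scenarios with the same compute load.
    air = PowerBreakdown(compute_kw=1000.0, it_overhead_kw=150.0, facility_overhead_kw=400.0)
    hybrid = PowerBreakdown(compute_kw=1000.0, it_overhead_kw=50.0, facility_overhead_kw=370.0)
    for name, case in (("air", air), ("hybrid", hybrid)):
        print(f"{name:>6}: total={case.total_kw:.0f} kW  PUE={case.pue:.3f}  "
              f"ITUE={case.itue:.3f}  TUE={case.tue:.3f}")
```

In this made-up example, total power and TUE both fall in the hybrid case while PUE gets slightly worse, because the drop in IT overhead shrinks the PUE denominator. That is the effect that makes PUE a poor yardstick for comparing air and liquid cooling.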

In high-density data centers, liquid cooling improves the energy efficiency of IT and facility systems compared to air cooling. In our fully optimized study, the introduction of liquid cooling created a 10.2% reduction in total data center power and a more than 15% improvement in TUE.

Higher efficiency with Liquid Cooling Technologies

Maximizing the data center liquid cooling implementation — in terms of the percent of the IT load cooled by liquid — delivers the highest efficiency. With direct-to-chip cooling, it isn’t possible to cool the entire load with liquid, but approximately 75% of the load can be effectively cooled by direct-to-chip liquid cooling.

Liquid cooling can enable higher chilled water, supply air, and secondary inlet temperatures that maximize the efficiency of facility infrastructure. Hot water cooling, in particular, should be considered. Secondary inlet temperatures in our final study were raised to 45°C (113°F), and this contributed to the results achieved while also increasing opportunities for waste heat reuse.

Data Center Cooling Solutions: Introduce Liquids with Confidence

To learn more about confidently introducing liquid cooling into your data center, watch this video.

Options for Hybrid Air/Liquid-cooling Systems and Fully Liquid-Cooled Data Centers

Wherever you are on your liquid cooling journey, Vertiv offers solutions and services to help you achieve your business goals and technical requirements.

As a global leader in thermal management, Vertiv brings a holistic approach to the liquid-cooled facility. Our solutions are based on years of research and development in collaboration with the Center for Energy-Smart Electronic Systems (ES2) partner universities, The Green Grid and Open Compute Project, and Green Revolution Cooling.

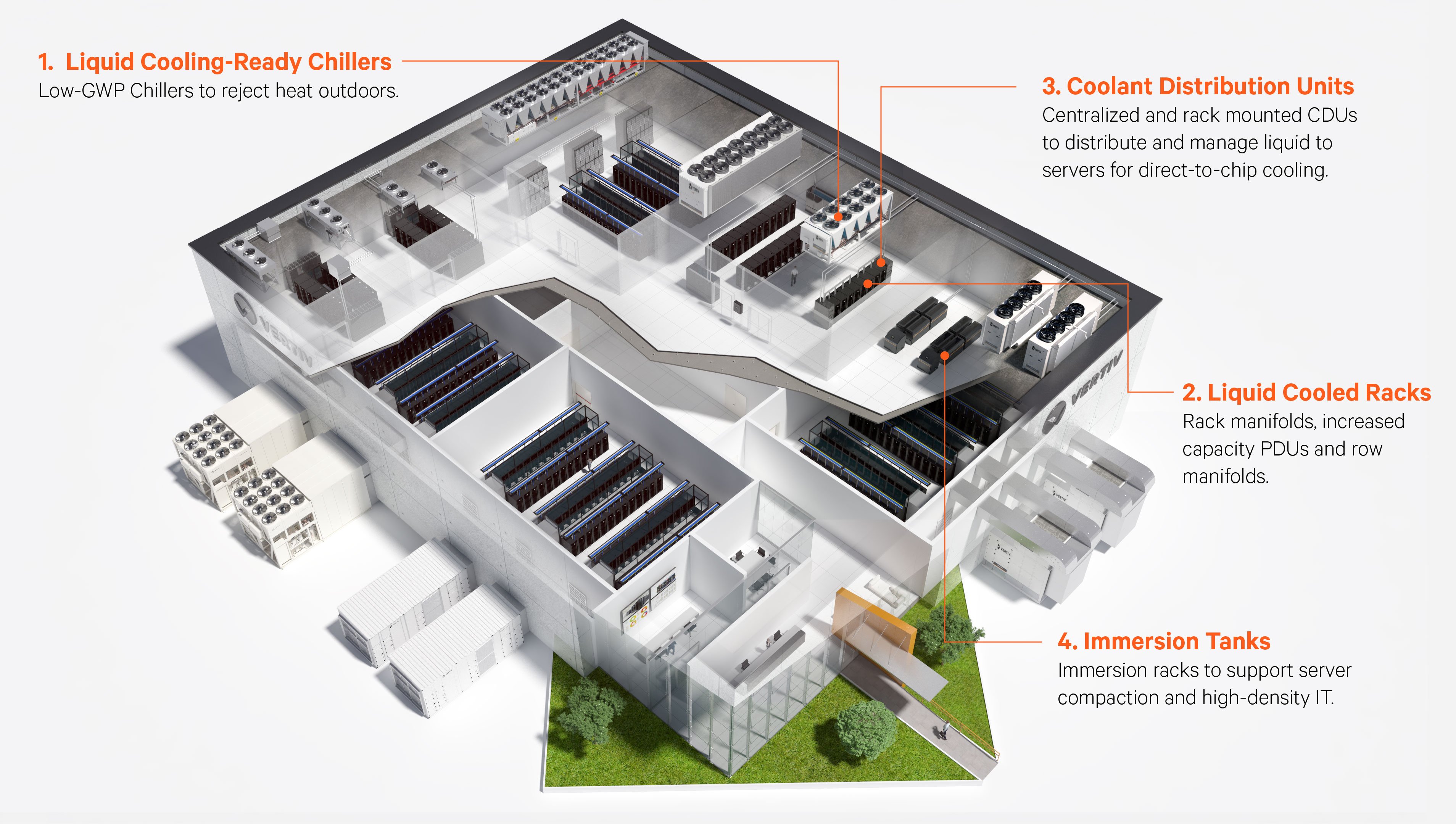

Through these efforts, and our intensive liquid cooling R&D program, Vertiv keeps pace with changing customer requirements. We deliver a portfolio of solutions that support hybrid air- and liquid-cooling, as well as fully liquid-cooled data centers, including:

- Coolant distribution units (CDUs) and chillers engineered to provide complete infrastructure solutions for data center liquid cooling

- Active and passive rear-door heat exchangers

- Heat rejection systems designed to work with liquid cooling CDUs and chillers

- Retrofit solutions that enable air cooling equipment to be modified to support liquid cooling

- Established practices and services for commissioning, startup, and operation of liquid cooling infrastructure
